State-of-the-Art Innovations to Prevent Financial Risk

The Feedzai Research department invests in applied research to improve our products and give users a better experience. We work closely with Product and Customer Success to develop and transfer innovations. We focus on long-term, disruptive, state-of-the-art research: we produce and protect our IP, publish peer-reviewed work, contribute to open source, partner with external researchers, and sponsor scholarships.

Recent Publications

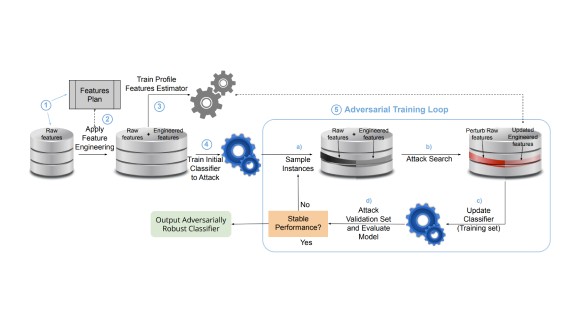

Adversarial training for tabular data with attack propagation

Published at KDD Workshop on Machine Learning in Finance 2023

Latest News

Paper accepted at CLeaR 2024

Paper accepted at AAAI-24

FiFar dataset release

Three papers presented at ICAIF, the ACM International Conference on AI in Finance

AutoVizuA11y is available as open source

The Feedzai Women Science Scholarships at Técnico awards

Recent Blog Posts

AML Reimagined: LaundroGraph Exploits Graph Structure to Assist Anti-Money Laundering Activities

We introduce LaundroGraph, a self-supervised system based on graph neural networks that assists experts during anti-money laundering activities.

Mário Cardoso

TimeSHAP: Explaining recurrent models through sequence perturbations

Recurrent Neural Networks (RNNs) are a family of models used for sequential tasks, such as predicting financial fraud based on customer behavior. These models are very powerful, but their decision processes are opaque, which makes them black boxes to humans. Understanding how RNNs work is imperative to assess whether a model is relying on spurious correlations or discriminating against certain groups. In this blog post, we provide an overview of TimeSHAP, a novel model-agnostic recurrent explainer developed at Feedzai. TimeSHAP extends the KernelSHAP explainer to recurrent models. You can try TimeSHAP at Feedzai’s GitHub.

Joao Bento, André Cruz, Pedro Saleiro

Understanding FairGBM: Feedzai’s Experts Discuss the Breakthrough

Feedzai recently announced that we are making our groundbreaking FairGBM algorithm available via open source. In this vlog, experts from Feedzai’s Research team discuss the algorithm’s importance, why it represents a significant breakthrough in machine learning fairness beyond financial services, and why we decided to release it via open source.

Pedro Saleiro

Research Areas

AI Research

The AI group's mission is to build next-gen RiskOps AI, responsible and explainable by design, that safeguards businesses and people from fraud and financial crime.


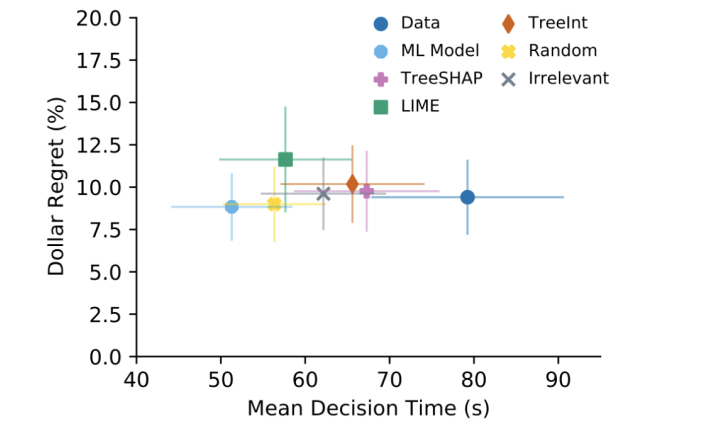

Data Visualization

The Data Visualization group aims to make complex data clearer for Fraud Analysts & Data Scientists through insightful, beautiful data experiences.


Systems Research

The Systems Research group aims to enhance the performance and reliability of the RiskOps Platform through innovation in a number of key areas.


Page printed on 27 Apr 2024. Please see https://research.feedzai.com for the latest version.